DataOps Principle #16 - Monitor Quality and Performance

Over the past year I’ve been leveraging Application Insights on my blog to monitor traffic and issues. Surprisingly, Part 2 continues to get regular traffic and the template I provided to help monitor Power BI refreshes receives regular traffic as well. While it’s exciting that people are interested in making sure their Power BI datasets refreshes are healthy and thus embracing “Monitoring for Quality and Performance” within DataOps, I knew the template I offered could be better.

And why is that? Well, I’m glad you asked. Here are two reasons why:

1) Complicated Setup – The template I offered required you to setup an application registration and this always presents a risk for complications. It’s been my experience that making sure the application grants admin consent to APIs or asking an Azure administrator to grant admin consent, is a common oversight. In addition, making sure the Resource URL is the correct URL could also be problematic. Power BI Commercial, Government Community Cloud (GCC), GCC-High, and other sovereign clouds all have different Resource URLs. Thus, I wanted to streamline the process to reduce issues during setup.

2) Listening to an Expert – The template was based on querying Power BI’s REST APIs using a OAuth2 token generated within the dataset. This requires you to save a password within the dataset. Now, if you protect access to the dataset, you shouldn’t be worried; however, I knew that this wasn’t the best option. I was further pushed to find a better template when a blog post was published by Chris Webb a month after I published Part 2. In his post it summarized Microsoft’s advisement that if you want to connect from Power BI to a REST API that uses OAuth2 authentication (and that’s what I was doing in the template), then you need to build a custom connector.

This may not be a surprise to you, but there are custom connectors out there that you can pay for, and it enables you to query Power BI’s REST APIs. Kudos to those companies seeing a good business opportunity, but I always felt that you shouldn’t have to pay for a connector that queries Power BI within Power BI. So, I went looking for ways to build an open-source connector.

Building a Custom Connector

Thanks to Microsoft’s training, I was able to understand how to build a connector. Yet, I needed Visual Studio to build a connector, and if I wanted to easily enable others to contribute to this connector, I needed to use Visual Studio Code. Thankfully I noticed in September 2022 that a SDK was being developed by Microsoft to offer that capability in Visual Studio Code. I downloaded the extension and began to build the connector.

After some trial and error, I was able to get a version built. For this connector I’d like to share some of my design considerations:

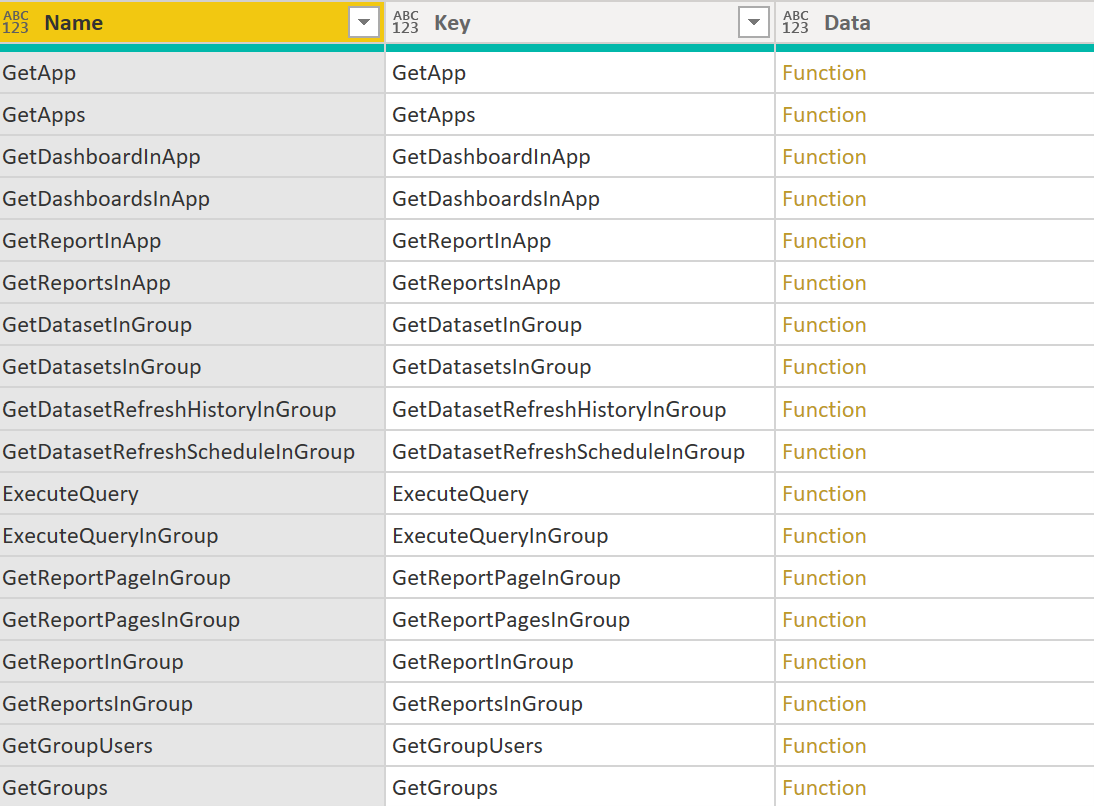

1) A Catalog of Functions – I wanted those using this connector to feel empowered to innovate, so I wanted to make this connector a gateway to many of the read-only endpoints within the Power BI REST APIs. Therefore, I borrowed Kim Burgress’s design pattern I wrote about in Part 13 and, thus, each endpoint is its own Power Query function.

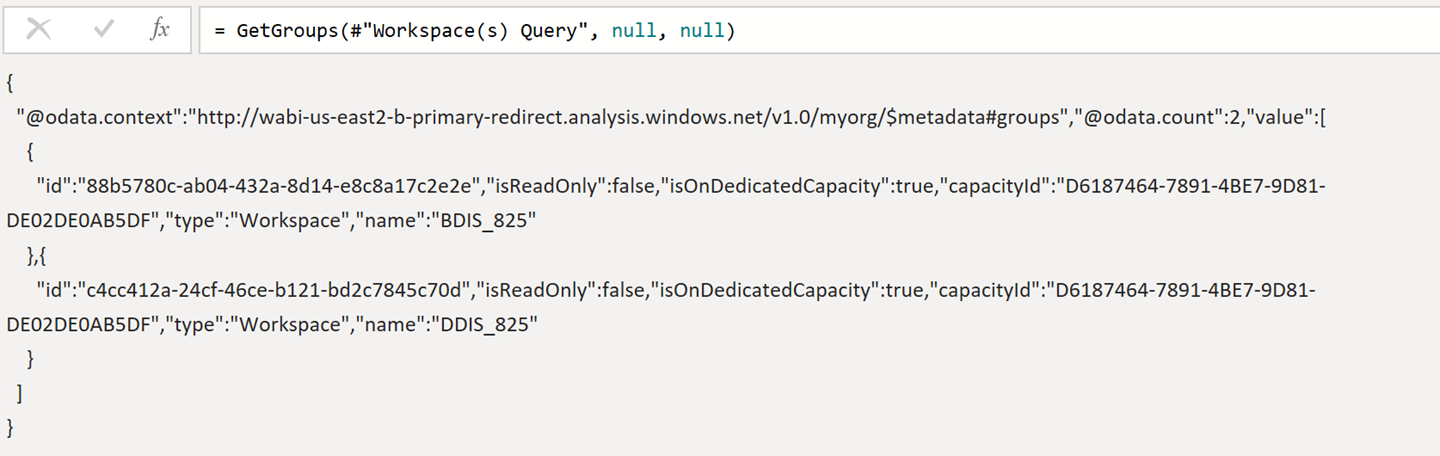

Figure 1– A Catalog of Power Query Functions for Power BI REST APIs

2) Raw JSON – Within the connector I need to make a critical design, when someone calls a Power Query function that hits a Power BI REST endpoint, does that function return a table transforming the JSON data returned from Power BI OR do I just return the raw JSON data and let the Power BI data analyst transform the JSON? I ended up choosing the latter option because I’ve worked in different sovereign clouds and I knew from experience that the Power BI REST APIs and the JSON properties returned by those endpoints differed across the cloud environments. If I made that decision, I would be on the hook to keep track of the changing JSON schemas as Microsoft updates them AND keep track of the differences in those schemas between the cloud environments. I knew this wasn’t feasible given the pace of change from Microsoft and the unpredictable change of bureaucratic instituations. This is a long-winded way of saying the user of this connector will need to use the transform JSON step in Power Query, choose what properties they need, and not have to wait on this custom connector to be updated when a new property appears in the API (you’re welcome… hopefully).

Figure 2 - Raw output from GetGroups so you can choose what JSON properties you need

Since the Resource URLs and API endpoints are different between commercial and sovereign clouds, I did have to make different customer connectors. Not a great option (and I’m open to ideas if this can be consolidated), but it works. As of November 2022, I have published three connectors:

1) Power Query Custom Data Connector for Power BI REST APIs (Commercial)

2) Power Query Custom Data Connector for Power BI REST APIs (Government Community Cloud)

3) Power Query Custom Data Connector for Power BI REST APIs (Government Community Cloud High)

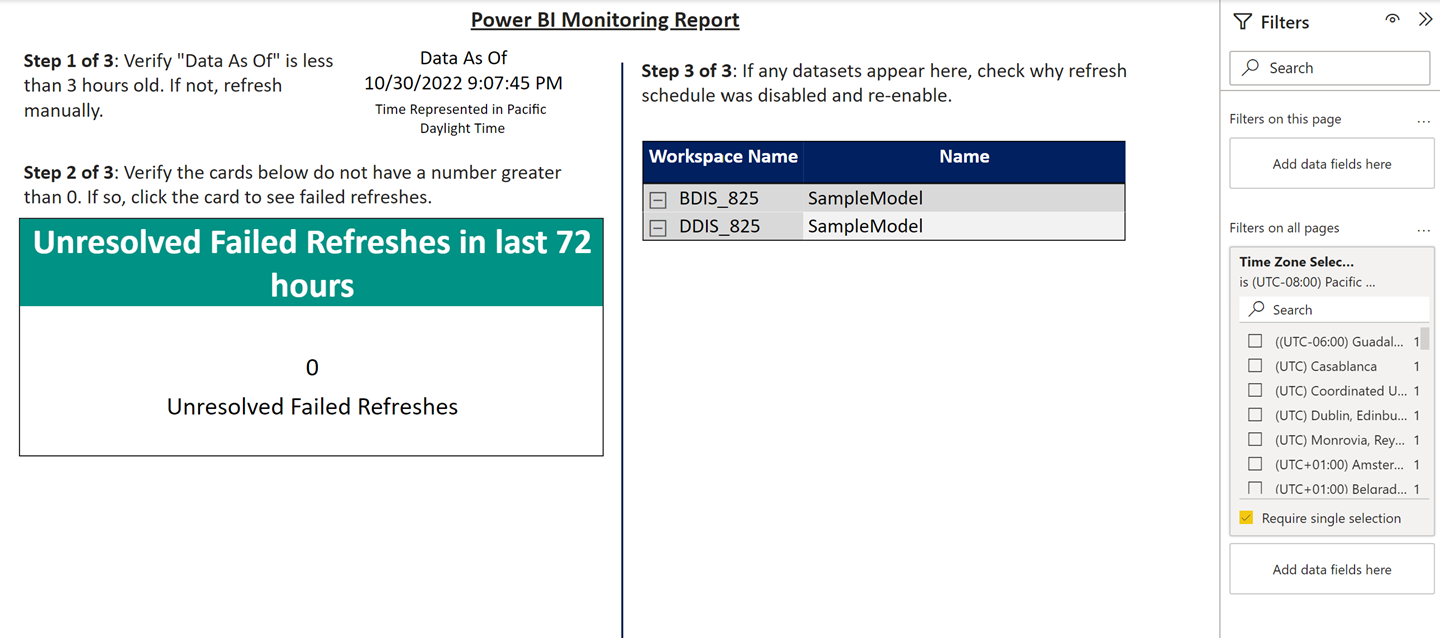

Sharing The New Template

With a new custom connector, the setup for running on your desktop and the instructions to run in a gateway (dataset refreshes with custom connectors require a gateway, sorry) are simplified. Plus, no password in your Power BI file… whew. I’m happy to share a new template on GitHub that uses this connector.

Figure 3 - A new Power BI template to monitor dataset refreshes using the custom connector

This updated template includes:

1) The ability to pull data refresh history and schedules across multiple workspaces.

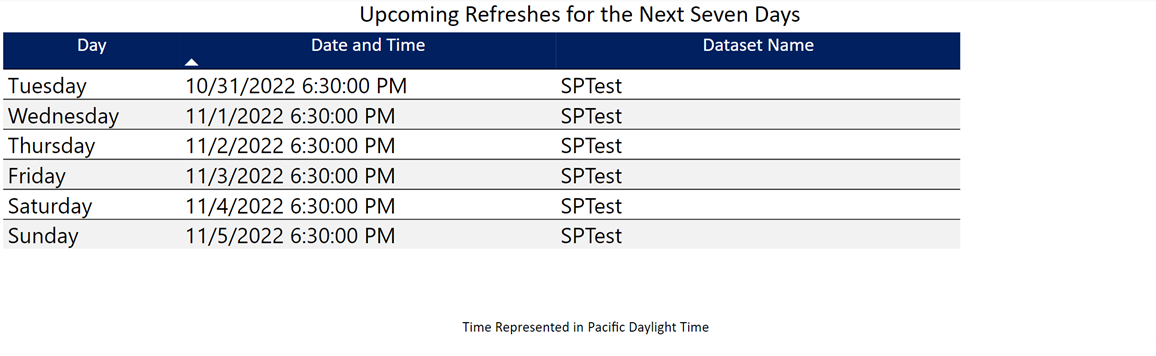

2) The ability to see the refresh schedules for the next seven days. This is very helpful when planning for changes or reviewing maintenance windows.

Figure 4 - Example of the Upcoming Refreshes tab in the template

3) The ability to choose your time zone. The Power BI services lets dataset owners choose which time zone to schedule their refresh times. This can lead to problems interpreting refresh times since the Power BI API returns the schedule for the time zone and not in UTC. This template will convert the time zones to UTC and then let you decide which time zone you want to see in the report. It also supports US Daylights Savings, and I’m open to incorporating other daylight savings rules (like the European Union) but I would need help testing.

Feedback

If you’re interested in testing or contributing to a version of this custom connector, please reach out to me on LinkedIn or Twitter. If you have feedback on the new template for monitoring Power BI Refreshes, please contact me as well.

Finally, if you’re going to be at the PASS Data Community Summit, November 15th-18th 2022, I hope to see you there and see you at my session on Wednesday, November 16th at 4:15 p.m. local time.