DataOps Principle #16 - Monitor for Quality and Performance

Now before I talk about this principle, I would like to provide some context. Most of the time my work is done without the privilege of being a Power BI administrator. The most privilege I get is administrator rights to a Power BI workspace. I have worked in some large enterprise environments and separation of privileges is paramount; thus, our teams do not have access to certain features a Power BI administrator may have that would better serve this principle.

“Our goal is to have performance, security and quality measures that are monitored continuously to detect unexpected variation and generate operational statistics.”

From my point of view the two words “monitored continuously” stand out in this principle and when it comes to Power BI the content that must be frequently checked for issues is the refreshing of the data. Power BI provides automated refreshes, incremental refreshes, refreshes with on-premise data (via gateways), and more. Yet, given our privileges my colleagues and I have been met by some challenges with monitoring for quality and performance within Power BI.

The Challenges

1) Email Alerts and Automated Scheduled Refreshes – What happens when a refresh fails? An email is sent to a predefined group email or individual. If your inbox looks anything like mine in a given day, the number of alerts are overwhelming and there is a good chance you may miss a failed refresh. I have also fallen prey to the Focused Inbox feature in Outlook that, by default, label those alert notices as “Other” thereby relegating the important messages into obscurity and allowing latent data to persist in the Power BI reports. Best I can gather is I am not training the Machine Learning model well enough to make my Focused Inbox better, but do I need to? And should I expect my colleagues to do the same?

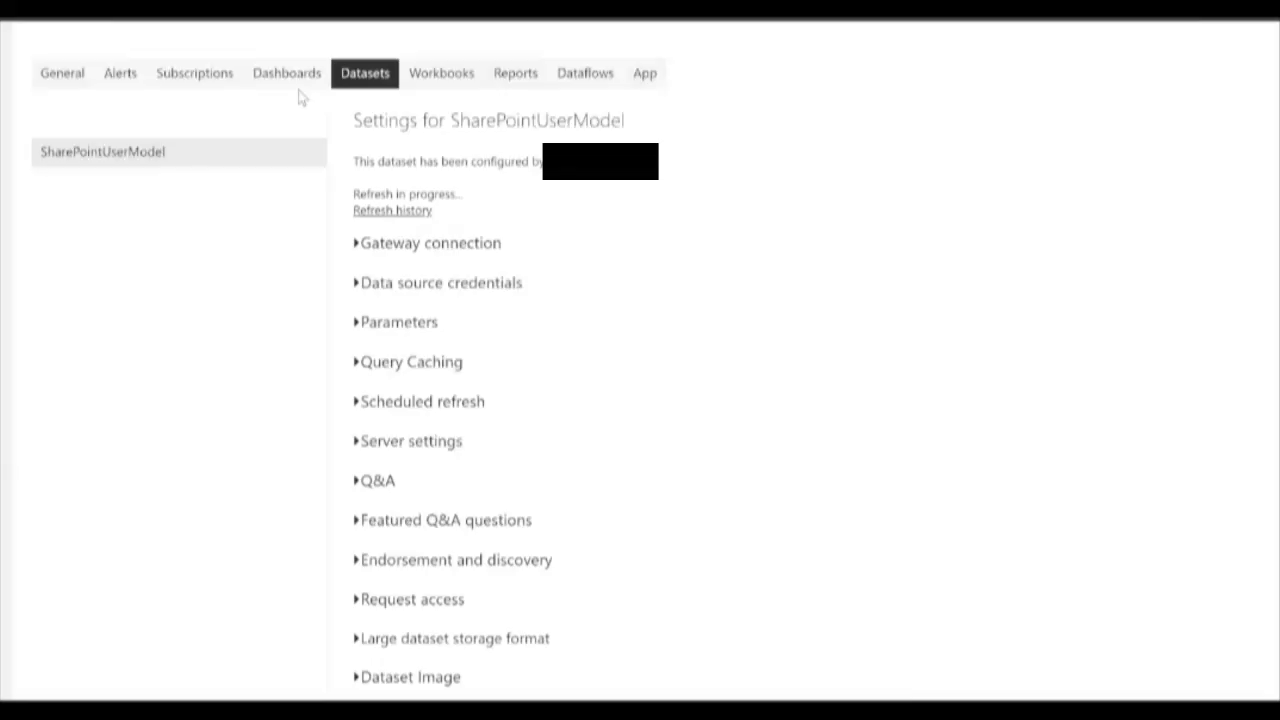

2) Gateways and Deactivating Consecutive Failed Refreshes - As of July 2021, Power BI deactivates the refresh scheduled after four consecutive failures, or the service encounters invalid or expired credentials. In the environments I work within, I am often not the gateway administrator, and I am not the administrator of many of the source systems. If our team has scheduled an automated refresh to run hourly and a database goes down or a gateway has a network issue while we are asleep, there is a good chance I wake up with emails from a client asking why the data has not been refreshed since yesterday. Furthermore, my team has to spend time going through the datasets to see which ones may have been disabled and re-enable them. That is overhead that is not necessarily delivering value.

3) Refresh Time Analytics – Power BI’s Refresh History is helpful for diagnosing refresh failures, but what about the time it takes to refresh? It tells me the start and end times, but I have to figure out how many minutes transpired AND I only get a limited history so I cannot really tell if, for example, 10 minutes is acceptable for refreshing this dataset. And even if I may know that is not acceptable, how does the person covering for me when I want to take a vacation know that is not acceptable? What context do they have to make an informed decision? Finally, and I feel like I am piling on now, why do I have to close and open the Refresh history modal to get the latest status of a refresh in progress? I find our team, clicking that the “Close” button and “Refresh History” link incessantly.

Writing these challenges is a little cathartic for me, but when it comes down to adhering to the principle of “Monitor for Quality and Performance” within Power BI we need to be able to answer the key question:

How do we effectively operationalize the monitoring of refreshes?

Best Practices

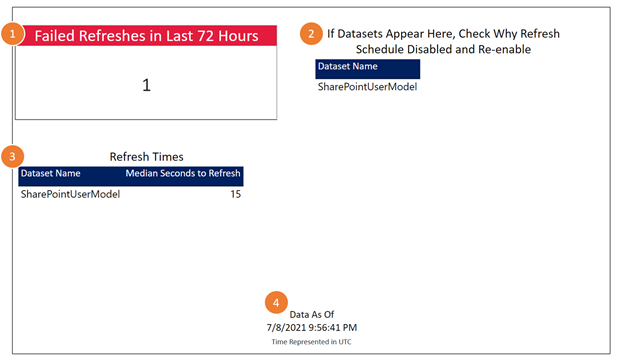

The answer to question 1 is, first and foremost DO NOT rely on emails. I am sure this is shocking to Site Reliability Engineers and DevOps Engineers reading this, but chances are the folks charged with keeping the Power BI reports in good shape will have alert fatigue with email. Instead, designate a person on your team to monitor for failed refreshes. If you are following DataOps principle #5, Daily Interactions, and your refresh schedules can accommodate it, have the designee check right before the daily standup and have it as a 16th minute item (Heather and Greg, you know who you are, that was a good idea on our last project). Moreover, give that designee the right equipment with a Power BI Report to keep an eye on Power BI. Below is a simple report that uses Power BI APIs and it provides the following:

1) A simplified card that indicates if any failed refreshes occurred within the last 72 hours on your workspace. If it is green and 0, we are good. If it is red with a number greater that zero, click on the card and you will see what datasets have issues.

2) A straightforward table that indicates if any datasets listed have had their automate refreshes disabled and, thus, need to be re-enabled. No more manually checking each dataset.

3) A listing of median refresh rates for each dataset. A Power BI novice can set conditional formatting on this visual to turn red if the median time surpasses a concerning threshold.

4) Finally, and most importantly, the report has a “Data As Of” timestamp. You are going to have this report on its own automated refresh schedule. Train those monitoring the refreshes with the report to make sure that timestamp is recent!

I have shared this report template on GitHub at this link. This is a version that works with a service account assigned a Premium Per User license ($240/year and well worth the investment). You will need to update some parameters within Power Query to connect to your workspace correctly and I geared it towards the U.S. Commercial version of Power BI. I added a readme to this folder in the repo that explains about the inner workings and configuration. If you’re interested in a version that works with Power BI Premium (using an app service principal) let me know.

In the meantime, I’d love to hear your thoughts? Have you had similar experiences? Is this helpful? Let me know on LinkendIn or Twitter, please.