Part 35 – Commenting Power Query with Azure OpenAI

Leveraging Artificial Intelligence (AI) to reduce cycle times.

Over the past several years, I've seen a lot of Power Query code. Whether it comes from a dataset a teammate built or I inherited supporting the dataset, understanding Power Query built by others can be challenging.

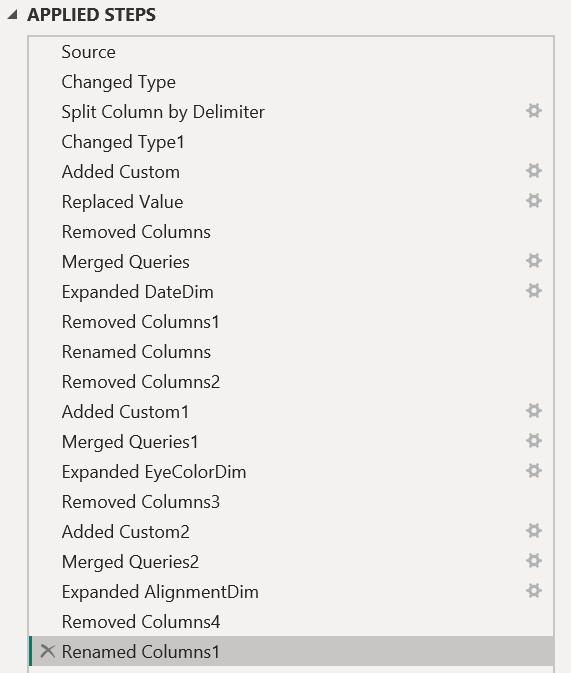

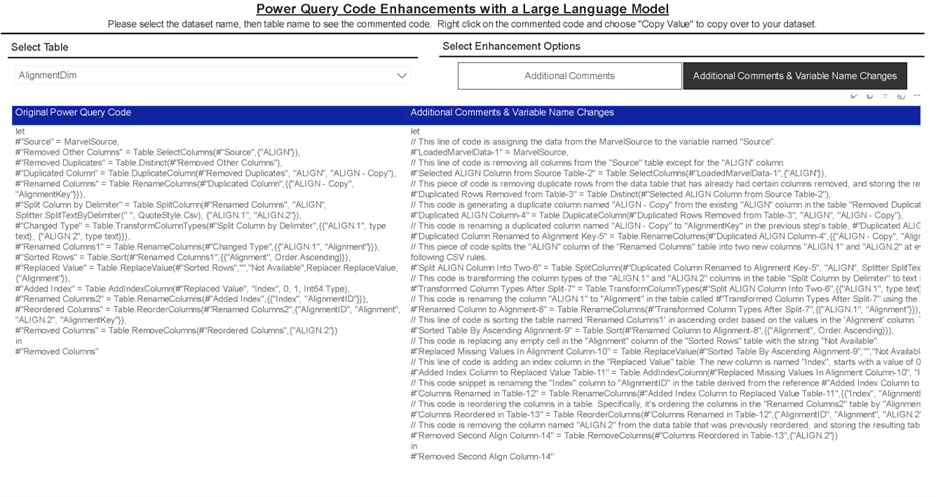

When reviewing the new Power Query code, you may encounter a list of applied steps that look similar to Figure 1:

Figure 1 - Example of Power Query code with default names that provide little context.

What do you do next? Spend the time to click on each step to understand the code, right? However, this solution is time-consuming. For example, if you needed to update a custom column, you would need to slog through a series of manual steps: hunting and clicking through the applied steps to find the appropriate column, deciphering the code in Advanced Editor, copying the code to a text editor, and—finally—searching.

These process inefficiencies add up, leading to slower delivery to customers and poor maintenance. They also represent the antithesis of DataOps, which states, “We should strive to minimize the time and effort to turn a customer need into an analytic idea, create it in development, release it as a repeatable production process, and finally refactor and reuse that product.”

Therefore, to save time and incorporate DataOps principles, I typically ask my teams to emphasize two integral practices:

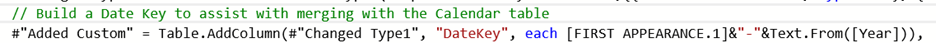

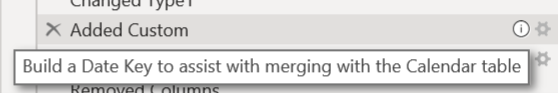

1) Add Comments - For steps involving merges, custom functions, custom columns, or significant complexity, add an explanatory comment before each step via Advanced Editor. These comments provide context and details to reduce the need for interpretation. Figure 2 provides an example of a comment in the Advanced Editor, and Figure 3 demonstrates how that comment appears in the Applied Steps windows.

Figure 2 - Example of a comment added in the Advanced Editor.

Figure 3 - Example of the comment in the Applied Steps panel.

2) Use Descriptive Column Names - Instead of labeling steps with ambiguous names such as “Added Custom Column” or “Renamed Columns8,” make the step more descriptive in roughly 25 to 50 characters. For example, if you created a custom column named “Fiscal Year,” you could rename the step to “Added Fiscal Year.”

If You Can’t Enforce It, Have Artificial Intelligence Do It.

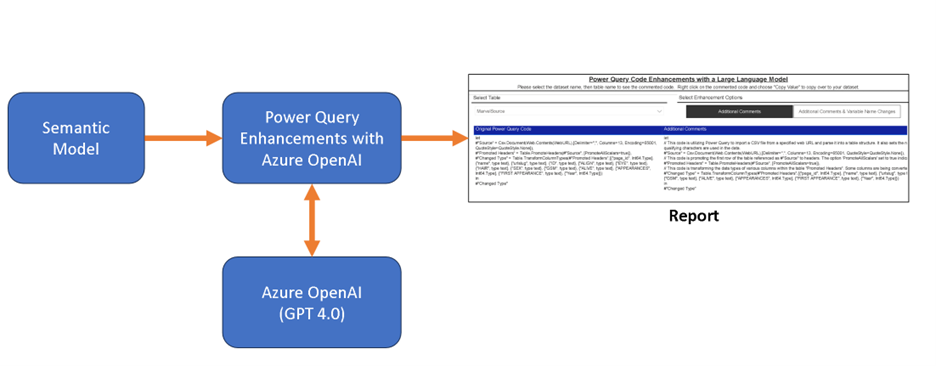

As you may already know, enforcing these practices is difficult. Low-code practitioners and even pro-code developers have been encouraged to comment on their work appropriately since the dawn of programming languages, but few routinely do. This is where AI comes in. Large Language Models can help apply these practices. With ChatGPT available through Azure Open API, I built a Power BI template that could point to a dataset in the Power BI service, parse the Power Query code, and offer a transformed version of each table. Figure 4 provides a high-level overview of the data pipeline.

Figure 4 - High-level overview of the data pipeline using Azure OpenAI.

Figure 5 provides an example. I also have a public version of this report that analyzed a version of a sample dataset (errr…semantic model) I’ve used for demonstrations.

Figure 5 - Screenshot of Power Query Code transformed by ChatGPT 4.0 in Azure OpenAI’s Service.

With this transformed code available, you then could copy and replace the existing code AND test accordingly.

Design

If you’re interested in how this works, the template depends on the following two components:

1) Dynamic Management View Queries - To pull semantic model information, I needed to be able to run dynamic management view queries to pull the Power Query code for the dataset via XMLA. This required a premium per-user workspace for housing the semantic model.

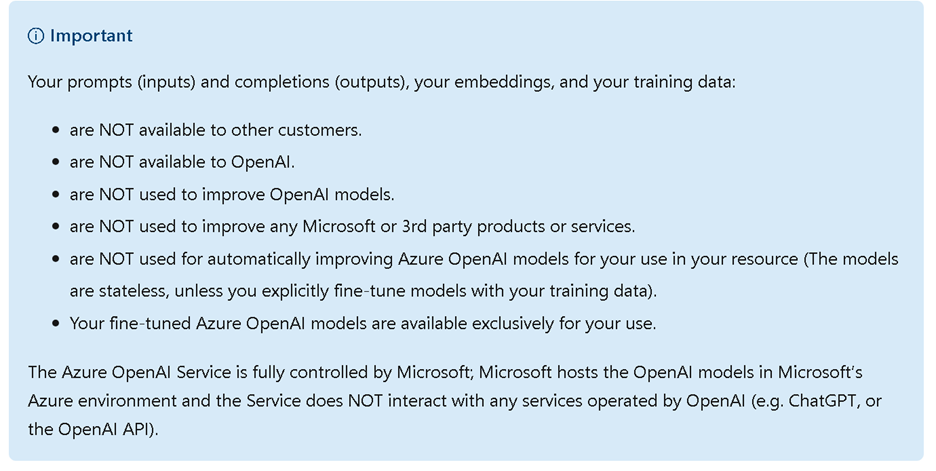

2) Azure Open API - This template depends on you having a subscription to Azure Open API available. I chose Azure Open API for the terms of service and the easier path for authority to operate within my working environments (see Figure 6). To set up an Azure Open API endpoint, please see this article.

Figure 6 - Microsoft’s Azure Open AI statement of data, privacy, and security as of January 6^th^, 2024.

Execution

With those two components in place, the template performs the following:

1) Asks you to identify the Azure Open AI endpoint and key.

2) Asks you to identify the XMLA Endpoint and Dataset Name.

3) Uses the information provided to extract Power Query code from the dataset for analysis.

4) Prompts ChatGPT 4.0 to analyze each step in the Power Query code and applies the two best practices.

5) Transforms Power Query code in each table in the dataset based on Chat GPT responses.

Try It Yourself

If you can use Azure Open AI, give the template a try and let me know what you think. As always, I look forward to hearing from you on LinkedIn or Twitter.

This article was edited by my colleague and senior technical writer, Kiley Williams Garrett.